Artificial Intelligence (AI) is a term that pervades our lives today, influencing everything from the way we interact with technology to how businesses operate. But have you ever stopped to wonder where it all began? The origins of AI are not just rooted in the technological advancements of the 20th century; they can be traced back to ancient history, where concepts of reasoning and automation first emerged. This journey of AI development is as intricate as it is fascinating, showcasing humanity’s enduring quest to replicate and enhance cognitive functions through machines. In this article, we will explore the major milestones in the evolution of AI, from its philosophical beginnings to the cutting-edge innovations shaping our future. By understanding this timeline, we can not only appreciate the technology we have today but also anticipate the possibilities that lie ahead.

The Philosophical Foundations of AI

The seeds of artificial intelligence were planted long before computers existed, rooted in philosophy and mathematics. Ancient philosophers like Aristotle contemplated the nature of reasoning and logic, laying the groundwork for formal reasoning systems. Aristotle’s syllogism, a form of deductive reasoning in which a conclusion follows necessarily from two premises, is an early attempt to give thinking a fixed structure. Fast forward to the 17th century, and thinkers like René Descartes and Gottfried Wilhelm Leibniz began to explore the idea of mechanized thought. Leibniz envisioned a universal symbolic language in which reasoning could be reduced to calculation, an idea often cited as a distant precursor of symbolic logic and computing. These philosophical inquiries set the stage for the modern understanding of artificial intelligence, suggesting that the quest for machine cognition was as much a theoretical endeavor as it was a practical one.

The Birth of Computing and Early AI

The 20th century marked a monumental shift with the advent of computers, which provided the necessary tools for AI development. In the 1940s and 1950s, pioneers such as Alan Turing and John von Neumann began to develop the theoretical frameworks that would underpin artificial intelligence. In his 1950 paper “Computing Machinery and Intelligence,” Turing proposed what is now known as the Turing Test, a criterion for judging whether a machine can exhibit intelligent behavior indistinguishable from that of a human. Meanwhile, von Neumann’s work on stored-program computer architecture laid the foundation for the design of electronic computers. These early innovations ignited a wave of interest in the possibility of creating machines that could think, leading to the first AI programs that attempted to simulate human reasoning and learning capabilities.

The Dartmouth Conference: A Defining Moment

In 1956, the Dartmouth Conference marked a pivotal moment in the history of artificial intelligence. The term “artificial intelligence” itself came from the 1955 proposal for the event, written by John McCarthy, Marvin Minsky, Nathaniel Rochester, and Claude Shannon. Their summer workshop gathered some of the brightest minds in computer science and mathematics. The attendees envisioned a future where machines could learn from experience, adapt to new information, and even understand natural language. The Logic Theorist, created by Allen Newell, Herbert Simon, and Cliff Shaw and often described as the first AI program, was presented at the workshop, and the same team followed it shortly afterward with the General Problem Solver; both were designed to mimic human problem-solving abilities. The conference catalyzed significant research and funding in AI, and the excitement and optimism surrounding it laid the groundwork for the AI boom of the late 1950s and 1960s.

The Rise and Fall of AI: The First AI Winter

Despite the initial optimism, the path of artificial intelligence was not a straight line. The 1970s ushered in the first “AI winter,” a period marked by disillusionment and reduced funding for AI research. Early AI systems struggled to perform complex tasks, and the limitations of rule-based algorithms became apparent. For instance, while programs could solve specific problems, they lacked the flexibility and adaptability of human intelligence. The high expectations set during the Dartmouth Conference were unmet, leading to skepticism about the viability of AI. This setback prompted researchers to reevaluate their approaches, focusing on more practical applications and foundational theories to advance the field. The AI winter, although challenging, ultimately drove innovation as researchers began to explore new methodologies and technologies.

Expert Systems and Renewed Interest

The 1980s witnessed a resurgence of interest in artificial intelligence, primarily through the development of expert systems. These AI programs were designed to emulate the decision-making abilities of human experts in specific domains, such as medicine and finance. Earlier research systems like MYCIN, developed at Stanford in the 1970s to diagnose bacterial infections, had already showcased the potential of AI to solve real-world problems. Expert systems relied on a knowledge base of facts and a set of if-then inference rules to draw conclusions, allowing them to provide valuable insights in specialized fields. This renewed interest led to increased funding, particularly from corporate sectors, as businesses recognized the potential of AI to enhance productivity and efficiency. The success of expert systems reignited the belief that machines could achieve human-like reasoning within narrow domains, setting the stage for further advancements in AI.
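The pairing of a knowledge base with inference rules can be illustrated with a minimal sketch. The Python below uses forward chaining, which repeatedly applies if-then rules to known facts until nothing new can be derived. It is a toy under stated assumptions: the rules and fact names are hypothetical, and historical systems differed in detail (MYCIN, for example, reasoned backward from goals and attached certainty factors to its conclusions).

```python
def forward_chain(facts, rules):
    """Apply if-then rules repeatedly until no new facts can be derived."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            # A rule fires when all of its premises are already known.
            if conclusion not in known and premises <= known:
                known.add(conclusion)
                changed = True
    return known


# Hypothetical toy knowledge base for a finance-style decision.
# Each rule is (set of premises, conclusion); none come from a real system.
RULES = [
    (frozenset({"income_verified", "low_debt"}), "low_risk"),
    (frozenset({"low_risk", "long_credit_history"}), "approve_loan"),
]

derived = forward_chain(
    {"income_verified", "low_debt", "long_credit_history"}, RULES
)
print(sorted(derived))
```

The second rule can only fire after the first has added `low_risk`, which is why the loop keeps running until a full pass adds nothing new. The sketch also shows the limitation the field later ran into: the program knows only what its hand-written rules encode.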

The Emergence of Machine Learning and Neural Networks

The 1990s and early 2000s marked a transformative era in AI, characterized by the rise of machine learning and neural networks. Unlike traditional AI that relied on hand-written rules, machine learning algorithms enabled computers to learn from data and improve their performance over time. This shift was catalyzed by advancements in computational power and the availability of large datasets. Neural networks, loosely inspired by the structure of the human brain, became a focal point of research and development. Techniques such as backpropagation, popularized in the 1980s, made it practical to train multi-layer models and contributed to progress in image and speech recognition. Companies began to leverage machine learning for various applications, including recommendation systems and fraud detection, demonstrating the practical benefits of AI. This era laid the groundwork for the modern AI landscape, where data-driven decision-making became a cornerstone of technological innovation.
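To make “learning from data” concrete, here is a minimal sketch in plain Python. It fits a straight line to a handful of points by gradient descent, nudging two parameters in whichever direction reduces the prediction error. This is a deliberately simple stand-in, not a neural network: backpropagation applies the same gradient idea layer by layer using the chain rule. The data, the function name `fit_line`, the learning rate, and the step count are all assumptions chosen for illustration.

```python
def fit_line(xs, ys, lr=0.05, steps=2000):
    """Fit y ~ w*x + b by gradient descent on mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    losses = []
    for _ in range(steps):
        # Prediction error for every example under the current parameters.
        errors = [(w * x + b) - y for x, y in zip(xs, ys)]
        losses.append(sum(e * e for e in errors) / n)
        # Gradients of the mean squared error with respect to w and b.
        grad_w = 2 * sum(e * x for e, x in zip(errors, xs)) / n
        grad_b = 2 * sum(errors) / n
        # Step against the gradient to reduce the error.
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b, losses


# Illustrative data generated from y = 2x + 1 (an assumption for the demo).
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [2.0 * x + 1.0 for x in xs]

w, b, losses = fit_line(xs, ys)
print(f"w={w:.3f}, b={b:.3f}")
print(f"first loss={losses[0]:.3f}, last loss={losses[-1]:.6f}")
```

No rule for the relationship between `x` and `y` is written into the program; the parameters are recovered from the examples alone, and the recorded loss falls as training proceeds. That is the contrast with rule-based systems that the shift to machine learning turned on.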

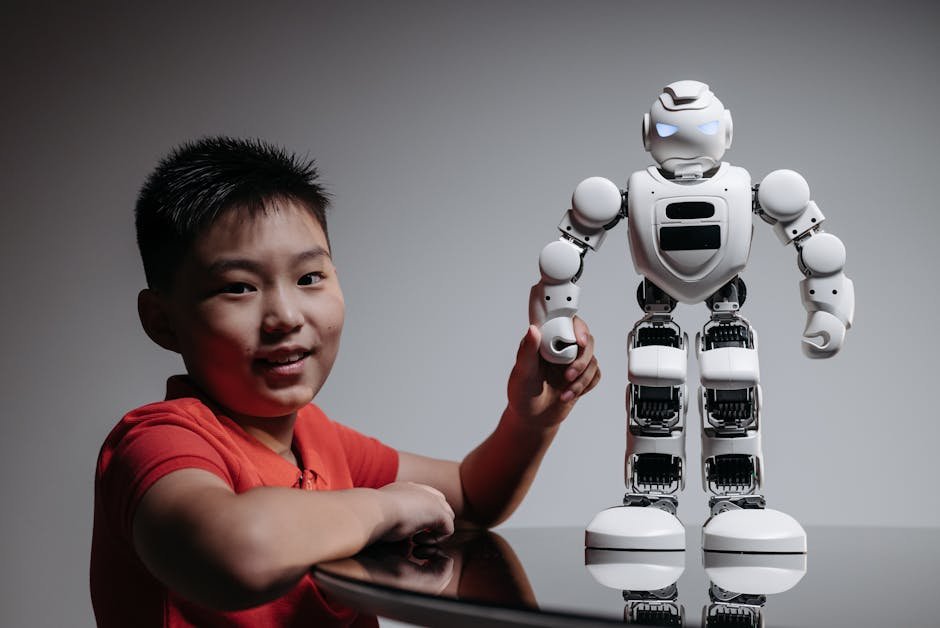

The Modern Era: Deep Learning and Beyond

Since the early 2010s, artificial intelligence has seen an explosion of interest and innovation, largely driven by advancements in deep learning. This subset of machine learning employs multi-layered neural networks to analyze vast amounts of data, achieving unprecedented accuracy in tasks such as natural language processing and computer vision. Breakthroughs like Google DeepMind’s AlphaGo, which defeated top professional Go player Lee Sedol in 2016, showcased the ability of AI to tackle problems long thought to be out of reach for machines. AI technologies are now integrated into everyday applications, from virtual assistants like Siri and Alexa to autonomous vehicles. The rapid development of AI has raised important ethical considerations, prompting discussions about responsible AI use and the societal implications of intelligent machines. As we continue to explore the boundaries of AI, it is crucial to navigate these challenges to ensure that technology serves humanity’s best interests.

Conclusion

The journey of artificial intelligence is a remarkable narrative that spans centuries, reflecting humanity’s relentless pursuit of knowledge and innovation. From philosophical musings to cutting-edge technologies, the evolution of AI has been shaped by both triumphs and setbacks. As we stand at the forefront of a new era in AI, it is essential to acknowledge the historical context that has brought us here. Understanding the origins of AI not only enriches our appreciation of the technology but also guides us in shaping its future. As we continue to unlock the potential of artificial intelligence, we must remain vigilant in addressing the ethical and societal challenges it presents, ensuring that AI benefits all of humanity.